Gemma 4 in LM Studio, tested in a real local workflow.

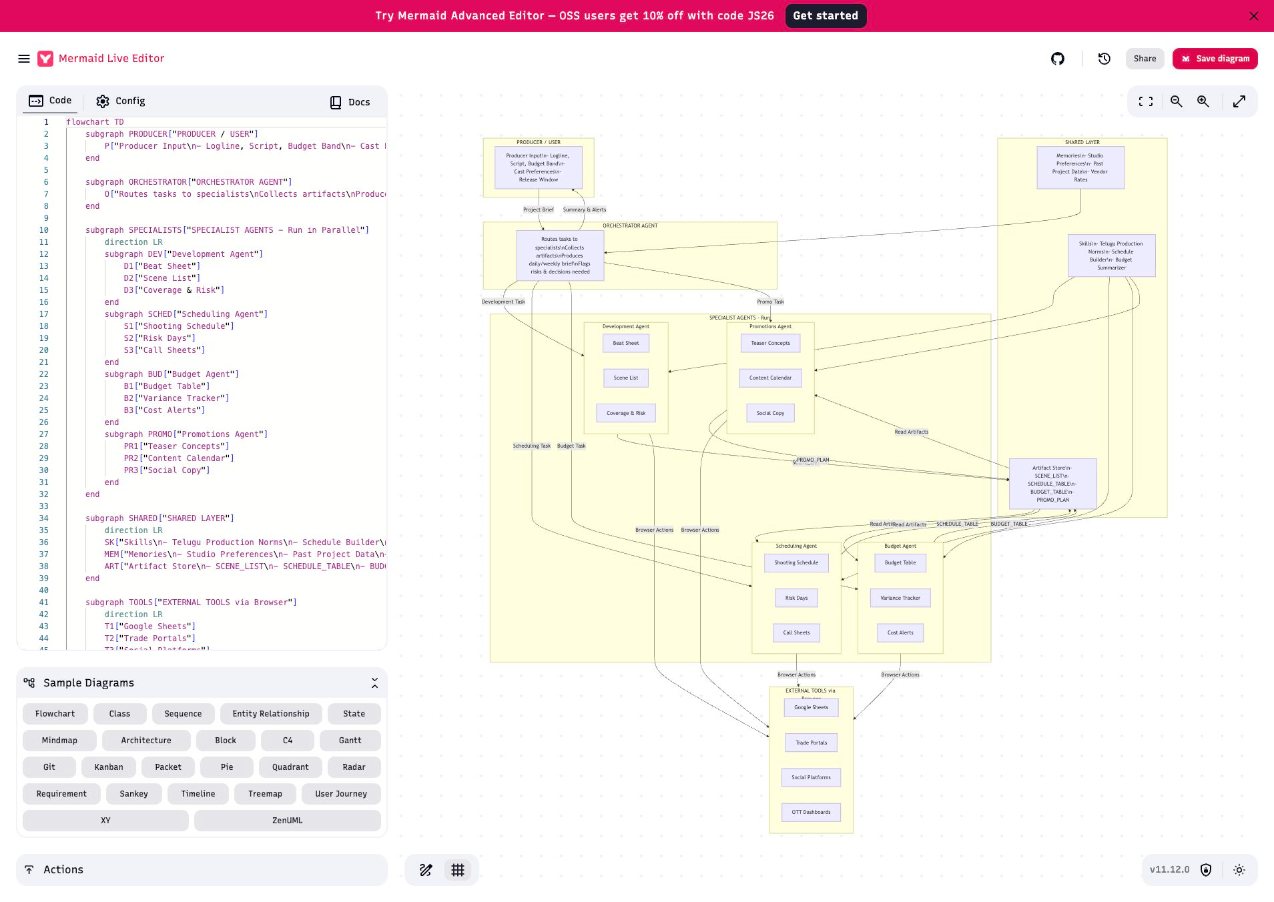

This companion page distills a hands-on benchmark of google/gemma-4-26b-a4b running in LM Studio on an Apple M4 Mac mini. The goal was not to repeat model claims, but to test what actually held up under local API-driven use: reasoning, coding, JSON, long-context recall, tool use, multilingual output, and vision.

This was a quantized local deployment, so the results reflect this specific LM Studio runtime, model build, and hardware envelope rather than the absolute ceiling of Gemma 4 across other serving stacks or quantizations.

At A Glance

If you only need the short version, it is this: Gemma was good enough to be useful for text-heavy local work, but not trustworthy enough in this session for strict automation or long-context completion.

Reasoning, debugging, coding, multilingual drafting, and practical business comparisons were the strongest parts of the run.

Strict JSON-only output, end-to-end tool completion, and long-context finalization all failed in practical workflow terms.

The runtime often spent a large part of the token budget in hidden reasoning before visible content appeared, which drove latency and truncation.

What Gemma 4 Is About

Google positions Gemma 4 as a family focused on reasoning, coding, multilingual support, long context, multimodality, and agentic workflows. This benchmark mapped those claims into local LM Studio tests and then evaluated the outcomes against real saved outputs instead of subjective impressions.

Reasoning

Operational bottlenecks, prioritization, and structured logic were among the strongest areas in the session.

Coding + Debugging

The model produced usable Python and correctly fixed a sliding-window bug, which makes it relevant for local dev assistance.

Structured Output

The semantic classification quality was fine, but strict machine-readable JSON formatting was not reliable enough.

Multilingual Output

Telugu, Hindi, and formal business English came out strong enough to count as practical rather than decorative.

Agentic Workflows

The model discovered the right tools, but it failed to complete the workflow cleanly, looping instead of finishing.

Multimodal Vision

Vision was usable after image resizing and larger output budgets, with screenshot-style understanding noticeably better than real-photo description.

What We Tested

The session combined text, tool, long-context, and vision tasks. The key distinction was not “can the model answer something smart?” but “does it finish the task in a way that is reliable enough for a local workflow?”

What We Found

The dominant behavior in this session was not model “stupidity.” It was runtime friction. The model often spent a large share of the response budget in hidden reasoning output before visible content appeared. That shaped almost every practical outcome.

Strong Text Utility

For local reasoning, debugging, coding, and operational analysis, the model was clearly usable and often quite good.

Automation Friction

Strict JSON and end-to-end tool workflows exposed that semantic correctness is not enough if the output format or stopping behavior is unstable.

Latency Cost

Even good answers often arrived slowly enough to matter. This setup is usable, but not comfortable for fast iterative loops.

Vision Is Real

The successful screenshot run confirms that multimodal input is available and useful in this setup, especially for UI comprehension.

Vision Needs Tuning

Image resizing and a larger token budget were necessary just to get stable visible outputs after hidden reasoning consumed the budget.

Practical Bottom Line

This is a good local text assistant in the tested environment, but not yet a reliable automation engine.

Vision Samples

Two image types were tested because they stress different aspects of local multimodal capability: screenshot/UI comprehension and real-photo scene grounding.

Companion Files

The repo includes the benchmark spec, the evaluator harness, the concise summary, and the raw saved outputs that support the conclusions on this page.